Data Cleansing with SQL and R

Kevin Feasel (@feaselkl)https://csmore.info/on/cleansing

Who Am I? What Am I Doing Here?

Dirty Data

What is dirty data?

- Inconsistent data

- Invalid data

- Incomplete data

- Inaccurate data

- Duplicate data

Philosophy

The ideal solution is to clean data at the nearest possible point. In rank order:

- Before it gets into the OLTP system

- Once it is in the OLTP system

- ETL process to the warehouse

- Once it is in the warehouse

- During data analysis

Not all systems follow OLTP => DW => Analysis, so it is valuable to know multiple techniques for data cleansing.

Motivation

Today's talk will focus on data cleansing within SQL Server and R, with an emphasis on R. In SQL Server, we will focus on data structures. In R, we will focus on the concept of tidy data.

This will necessarily be an incomplete survey of data cleansing techniques, but should serve as a starting point for further exploration.

We will not look at Data Quality Services or other data provenance tools in this talk, but these tools are important.

Agenda

- High-Level Concepts

- SQL Server - Constraints

- SQL Server - Mapping Tables

- R - tidyr

- R - dplyr

- R - Data and Outlier Analysis

Rules of Thumb

- Impossible measurements (e.g., count of people over 500 years old) should go. Don't waste the space storing that.

- "Missing" data (e.g., records with some NULL values) should stay, although might not be viable for all analyses.

- Fixable bad data (e.g., misspellings, errors where intention is known) should be fixed and stay.

- Unfixable bad data is a tougher call. Could set to default, make a "best guess" change(!!), set to {NA, NULL, Unknown}, or drop from the analysis.

Agenda

- High-Level Concepts

- SQL Server - Constraints

- SQL Server - Mapping Tables

- R - tidyr

- R - dplyr

- R - Data and Outlier Analysis

Relational Data Quality Tools

- Normalization

- Data types

- Primary key constraints

- Unique key constraints

- Foreign key constraints

- Check constraints

- Default constraints

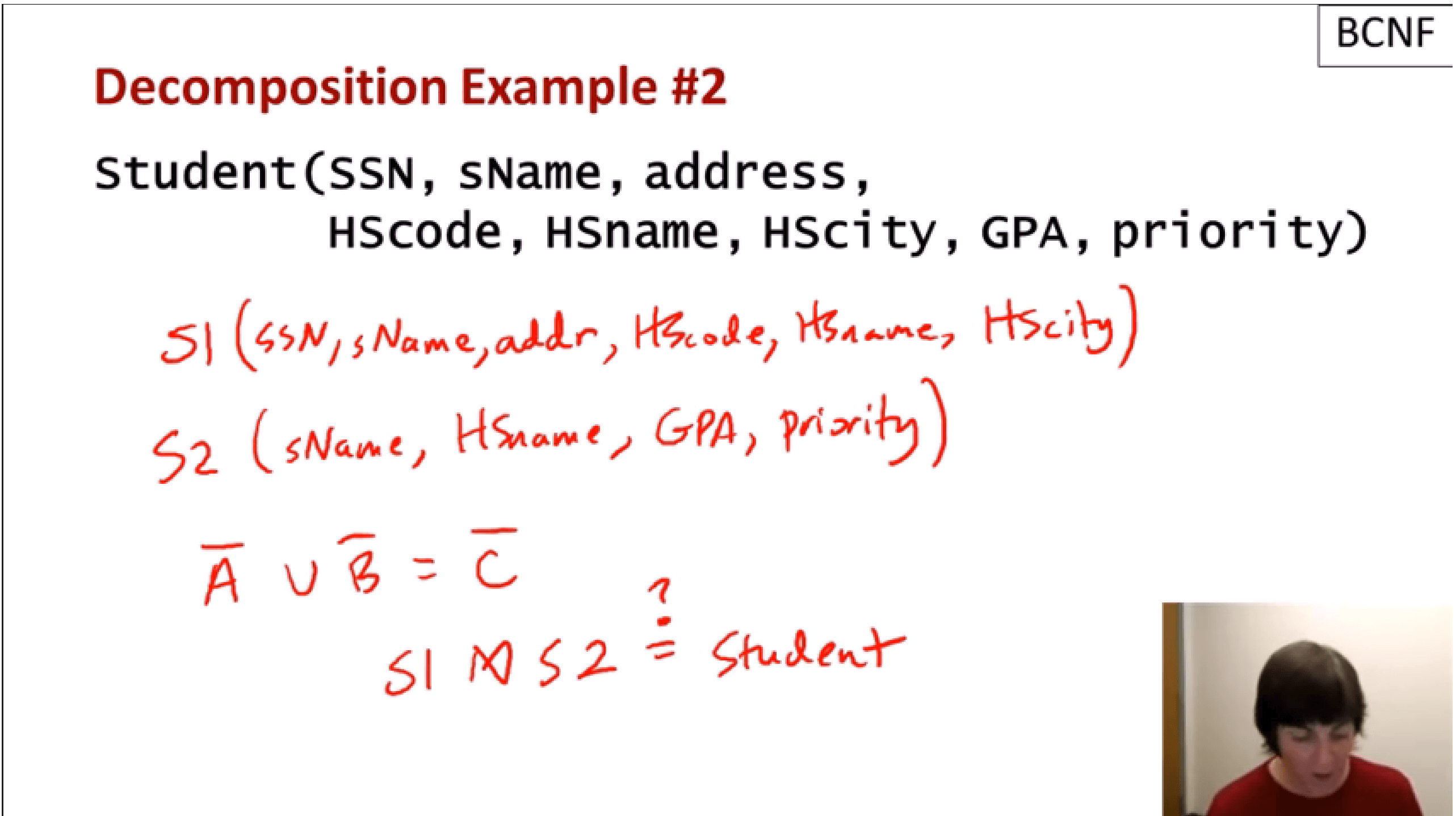

Normalization

When in doubt, go with Boyce-Codd Normal Form.

First Normal Form - consistent shape + unique entities + atomic attributes

Boyce-Codd Normal Form - 1NF + all attributes fully dependent upon a candidate key + every determinant is a key.

Data Types

Think through your data type choices.

- Use the best data type (int/decimal for numeric, date/datetime/datetime2/time for date data, etc.)

- Use the smallest data type which solves the problem (Ex: date instead of datetime, varchar(10) instead of varchar(max))

Constraints

Use constraints liberally.

- Primary key to describe the primary set of attributes which describes an entity.

- Unique keys to describe alternate sets of attributes which describe an entity.

- Foreign keys to describe how entities relate.

- Check constraints to explain valid domains for attributes and attribute combinations.

- Default constraints when there is a reasonable alternative to NULL.

Demo Time

Agenda

- High-Level Concepts

- SQL Server - Constraints

- SQL Server - Mapping Tables

- R - tidyr

- R - dplyr

- R - Data and Outlier Analysis

Mapping Tables

One technique for creating higher-level categories for items in transactional data is to use a mapping table: an external relationship made without directly modifying the primary data.

This can be a new (nullable) column on an existing table or a foreign key to a new table with the mapping relationhship.

Demo Time

Agenda

- High-Level Concepts

- SQL Server - Constraints

- SQL Server - Mapping Tables

- R - tidyr

- R - dplyr

- R - Data and Outlier Analysis

What is Tidy Data?

Notes from Hadley Wickham's Structuring Datasets to Facilitate Analysis

- Data sets are made of variables and observations (attributes and entities).

- Variables contain all values that measure the same underlying attribute (e.g., height, temperature, duration) across units.

- Observations contain all values measured on the same unit (a person, a day, a hospital stay) across attributes.

More on Tidy Data

Notes from Hadley Wickham's Structuring Datasets to Facilitate Analysis

- It is easier to describe relationships between variables (age is a function of birthdate and current date).

- It is easier to make comparisons between groups of attributes (how many people are using this phone number?).

- Tidy data IS third normal form (or, preferably, Boyce-Codd Normal Form)!

tidyr

tidyr is a library whose purpose is to use simple functions to make data frames tidy. It includes functions like gather (unpivot), separate (split apart a variable), and spread (pivot).

Demo Time

Agenda

- High-Level Concepts

- SQL Server - Constraints

- SQL Server - Mapping Tables

- R - tidyr

- R - dplyr

- R - Data and Outlier Analysis

dplyr

tidyr is just one part of the tidyverse. Other tidyverse packages include dplyr, lubridate, and readr.

We will take a closer look at dplyr with the next example.

Demo Time

Agenda

- High-Level Concepts

- SQL Server - Constraints

- SQL Server - Mapping Tables

- R - tidyr

- R - dplyr

- R - Data and Outlier Analysis

Data and Outliers

Using tidyr, dplyr, and some basic visualization techniques, we can perform univariate and multivariate analysis to determine whether the data is clean. We will focus mostly on univariate and visual analysis in the following example.

Demo Time

Wrapping Up

This has been a quick survey of data cleansing techniques. For next steps, look at:

- SQL Server Data Quality Services

- Integration with external data sources (APIs to look up UPCs, postal addresses, etc.)

- Value distribution analysis (ex: Benford's Law)

Wrapping Up

To learn more, go here:

https://csmore.info/on/cleansing

And for help, contact me:

feasel@catallaxyservices.com | @feaselkl

Catallaxy Services consulting:

https://CSmore.info/on/contact